Last updated: 11 May 2026

The AI agent landscape is finally making sense

Everyone is telling you to use AI agents. Very few people are telling you which one, for what, or why the category even matters.

That is why the space feels noisy. A lot of people try a built-in agent mode in a chat app, don’t see much magic, and assume the whole category is overhyped. Others look at the stronger tools, decide they are too much, and do nothing at all.

The useful question about agents is not “which brand is best?” It is “what job is this thing supposed to do?”

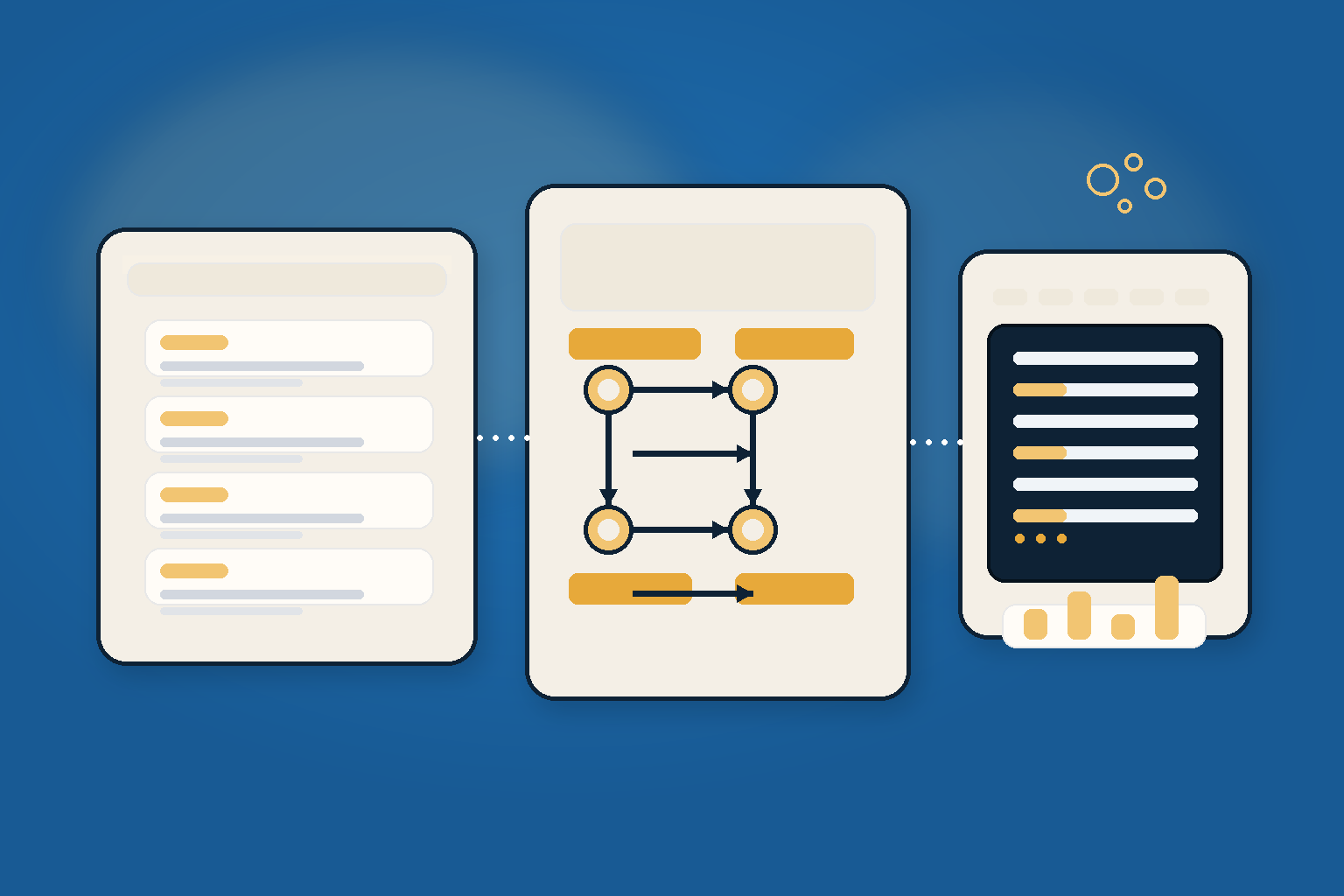

In this article I break the agent space into three practical tiers, plus a fourth ops-level layer, show where each one shines, and give you a simple way to choose the right starting point without wasting a week on the wrong tool.

In this article

- Why AI agents are really about execution surface, not just intelligence

- The three tiers: personal assistants, workflow automation, and code agents

- Where Manus, Claude, Zapier, n8n and Claude Code actually fit

- A simple 5-step way to choose the right tool for your job

- Common mistakes that make agents look worse than they are

1. AI agents are not one category

The word agent gets used for everything from a chat UI with a browser button to a coding assistant that can edit files and run tests.

That is the first problem.

The second problem is that different tools are optimized for different execution surfaces:

- Browser: research, form filling, account workflows

- Files and folders: sorting, organizing, restructuring local data

- SaaS workflows: inboxes, CRM, Slack, Drive, ticketing systems

- Codebase and terminal: debugging, refactoring, testing, shipping

If you choose the wrong surface, the tool looks weak when it is really just doing the wrong job.

Pro tip: before you pick a tool, ask one boring question: what does success look like? A PDF report, a cleaned folder, a CRM update or a working feature are four very different outputs.

2. Tier one: personal assistant tools

At the accessible end, you have tools like Manus and the Claude desktop app, which also works as a gentle entry path into Claude Code. These are where most non-technical people should start.

Manus: research and structured deliverables

Manus is strongest when the task is broad, messy and research-heavy. You give it a complicated brief, it breaks the task into parts, browses, compares, synthesizes, and comes back with a structured output.

What makes that useful is not the magic word agent. It is the combination of:

- parallel research

- fresh context per subtask

- a final deliverable that is easier to review than a giant chat log

That matters when you want a real report, not a summary that thins out after the first ten items.
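To make the pattern concrete, here is a minimal Python sketch of fan-out research: each subtask runs in its own worker with nothing but its own input, and the results are merged into one reviewable report. This is not how Manus is implemented. The names `research_subtask`, `run_brief` and `Finding` are hypothetical, and the worker is a placeholder where real browsing and synthesis would go.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Finding:
    subtask: str
    summary: str


def research_subtask(subtask: str) -> Finding:
    """Hypothetical worker: it sees only its own subtask, so it starts with fresh context."""
    # Placeholder for real browsing and synthesis.
    return Finding(subtask=subtask, summary=f"notes on {subtask}")


def run_brief(subtasks: list[str], max_workers: int = 4) -> str:
    """Fan the subtasks out in parallel, then merge them into one structured report."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() preserves input order, so the report follows the order of the brief.
        findings = list(pool.map(research_subtask, subtasks))
    lines = [f"{i}. {f.subtask}: {f.summary}" for i, f in enumerate(findings, start=1)]
    return "\n".join(lines)


report = run_brief(["pricing", "integrations", "security"])
print(report)
```

The point of the shape is that no worker inherits another worker's chat history, and the reviewer reads one numbered report instead of a long thread.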

The browser operator angle is also interesting: it can work inside your existing browser context, which is the difference between “AI chat” and “AI doing the thing in the tab you already use.”

Claude desktop app: the friendly starting point

For complete beginners, the Claude desktop app is a better on-ramp than a terminal-first setup. The Code tab gives you a gentler surface before you move into more advanced workflows.

That is a good pattern in general: start in the UI, then move down the stack once the task is clear.

OpenClaw: closer to your actual ops layer

Tools like OpenClaw go one step further. They can connect to files, email, calendar and browser workflows, then sit inside the messaging surface you already use.

That makes them powerful, but also a little more setup-heavy. If you want the easiest path, do not start here. Start here only when you already know the workflow you want to automate.

Examples where tier one shines:

- summarize 40 research pages into one decision-ready report

- turn a folder of screenshots into a clean, named archive

- scan a browser workflow and extract the relevant next steps

3. Tier two: workflow automation tools

If your task repeats, belongs to existing SaaS tools, and needs reliable handoffs, Zapier and n8n are the better fit.

Zapier: easiest entry point

Zapier is the friendliest place to start because it hides a lot of the plumbing. You describe the outcome, its AI copilot helps assemble the workflow, and you get a usable automation fast.

That makes it ideal for business ops tasks like:

- lead routing

- inbox triage

- summary generation

- CRM updates

- notification flows

The important thing is not that Zapier has AI now. It is that it gives that AI a safe runtime, retries, logs and permissioned connections to the tools you already use.
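What retries and logs buy you can be shown in a few lines of Python. This is only a sketch of the idea, not Zapier's implementation: `run_step`, `flaky_crm_update` and the linear backoff are assumptions made up for illustration.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
logging.basicConfig(level=logging.INFO)
log = logging.getLogger("workflow")


def run_step(name: str, step: Callable[[], T], attempts: int = 3, delay_s: float = 0.0) -> T:
    """Run one workflow step, logging every attempt and retrying on failure."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            result = step()
            log.info("step=%s attempt=%d ok", name, attempt)
            return result
        except Exception as exc:
            log.warning("step=%s attempt=%d failed: %s", name, attempt, exc)
            if attempt == attempts:
                raise  # out of retries: surface the error instead of hiding it
            time.sleep(delay_s * attempt)  # linear backoff between attempts
    raise AssertionError("unreachable")


calls = {"n": 0}


def flaky_crm_update() -> str:
    """Hypothetical step that fails twice before succeeding."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("CRM timeout")
    return "updated"


outcome = run_step("crm_update", flaky_crm_update)
```

A bare chat agent gives you none of this by default: when a call fails, nothing retries it and there is no record of what happened.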

n8n: more control, more ceiling

n8n is the more technical sibling. It exposes more of the logic, which means more setup, but also more control.

That is exactly why it is better for:

- branching logic

- human-in-the-loop review steps

- self-hosted workflows

- content ops with QA gates

If Zapier is “I need this working today,” n8n is “I want this to stay flexible when the workflow gets weird.”

Concrete example: a sponsor inquiry lands in Gmail, an agent researches the company, a summary gets written to Drive, and a human gets a Slack ping only if the lead passes the filter.

That is a workflow problem, not a chat problem.
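The sponsor example can be sketched as a plain pipeline. The Python below is an illustration under stated assumptions, not a Zapier or n8n export: `research_company`, `passes_filter`, the employee threshold and the company data are all made up, and `Outbox` stands in for Drive and Slack so the sketch runs without credentials.

```python
from dataclasses import dataclass, field


@dataclass
class Inquiry:
    sender: str
    company: str


@dataclass
class Outbox:
    """Stand-in for Drive and Slack."""
    drive_docs: list[str] = field(default_factory=list)
    slack_pings: list[str] = field(default_factory=list)


def research_company(company: str) -> dict:
    """Hypothetical agent step; a real one would browse and summarize."""
    known = {"Acme Corp": 1200, "Tiny LLC": 3}  # made-up data for the sketch
    return {"company": company, "employees": known.get(company, 0)}


def passes_filter(profile: dict, min_employees: int = 50) -> bool:
    """Assumed lead filter: only larger companies reach a human."""
    return profile["employees"] >= min_employees


def handle_inquiry(inquiry: Inquiry, outbox: Outbox) -> bool:
    """Research, always file a summary, ping a human only if the lead qualifies."""
    profile = research_company(inquiry.company)
    summary = f"{profile['company']}: {profile['employees']} employees, from {inquiry.sender}"
    outbox.drive_docs.append(summary)  # the summary is written for every inquiry
    if passes_filter(profile):
        outbox.slack_pings.append(f"Review sponsor lead: {profile['company']}")
        return True
    return False


outbox = Outbox()
big = handle_inquiry(Inquiry("ana@acme.example", "Acme Corp"), outbox)
small = handle_inquiry(Inquiry("bo@tiny.example", "Tiny LLC"), outbox)
```

Every step has a fixed input and output, which is why this belongs in a workflow tool rather than a chat thread.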

4. Tier three: Claude Code

Claude Code is in its own category.

This is not a chat assistant with coding vibes. It is a tool that can read your codebase, plan a change, edit files, run commands, test, debug and keep iterating until the thing works.

That makes it the right choice when the job is software, not research.

Anthropic’s own docs are refreshingly plain here: Claude Code can help you automate tedious work, build features, fix bugs, create commits, connect tools, and even run scheduled tasks.

A useful mental model:

- Manus = research and deliverables

- Zapier / n8n = repeatable business workflows

- Claude Code = shipping actual software

If you want to move faster with less effort, start with the tool that matches the task.

Example command: claude "write tests for the auth module, run them, and fix any failures"

That is the right shape of request. Goal first, implementation second.

5. How to choose the right tier

Here is the simplest decision path I know:

- If the task is one-off research or analysis, start with Manus.

- If the task repeats across SaaS tools, start with Zapier.

- If the task needs branching logic and control, use n8n.

- If the task changes code in a repo, use Claude Code.

- If you are still unsure, start smaller. The best agent is usually the one with the narrowest job description.

That last point matters more than it sounds.

People often start with the most powerful tool because it feels future-proof. In reality, it just makes the first week harder.
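The decision path above can be written down as a small Python function. Treat it as a sketch: the `Task` fields and the order in which the checks run are my assumptions, since the list does not say which rule wins when several apply.

```python
from dataclasses import dataclass


@dataclass
class Task:
    changes_code_in_repo: bool = False
    repeats_across_saas: bool = False
    needs_branching_or_control: bool = False
    one_off_research: bool = False


def choose_tool(task: Task) -> str:
    """Return a starting tool for a task, following the decision path in this section."""
    if task.changes_code_in_repo:
        return "Claude Code"
    if task.needs_branching_or_control:
        return "n8n"
    if task.repeats_across_saas:
        return "Zapier"
    if task.one_off_research:
        return "Manus"
    # Nothing matched: the job is not defined tightly enough yet.
    return "start smaller: narrow the job description first"


print(choose_tool(Task(repeats_across_saas=True)))
print(choose_tool(Task()))
```

Note that the fallback is not a tool at all. If none of the flags are set, the next step is to narrow the job, not to pick something more powerful.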

6. Before / after

Before: You describe a task in a giant prompt, copy-paste outputs between tabs, and manually clean up the result.

After: You pick the right tier, give it one clear job, and let it own the execution surface.

That is the actual productivity jump. Not more prompting. Less friction.

7. Common mistakes that make agents look worse than they are

Mistake 1: using a code agent for workflow automation

If the task is “whenever this email arrives, do X,” do not force a coding agent to play SaaS glue.

Mistake 2: starting with the hardest setup

A browser-connected personal ops agent is cool. It is also the wrong place to begin if you have not yet proven the workflow.

Mistake 3: letting context bloat

Long threads make everything slower and fuzzier. If the task is getting fuzzy, reset the context or split the work.

Mistake 4: confusing demos with durable systems

A flashy demo is not the same as a workflow that survives Monday morning.

Symptom → cause → fix:

- It feels random → too much scope in one prompt → split the job

- It misses details → wrong execution surface → move to the right tier

- It keeps looping → no clear success criteria → define the output first
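If you keep a troubleshooting note for your own setup, the same triage table fits in a small lookup. This is a hypothetical Python helper, and `TRIAGE` and `diagnose` are names invented for the sketch.

```python
# Symptom -> (likely cause, fix), copied from the list above.
TRIAGE = {
    "feels random": ("too much scope in one prompt", "split the job"),
    "misses details": ("wrong execution surface", "move to the right tier"),
    "keeps looping": ("no clear success criteria", "define the output first"),
}


def diagnose(symptom: str) -> str:
    """Look up a symptom and return its likely cause and fix."""
    key = symptom.strip().lower()
    if key not in TRIAGE:
        return "unknown symptom: describe what success should look like first"
    cause, fix = TRIAGE[key]
    return f"likely cause: {cause}; fix: {fix}"


print(diagnose("Keeps looping"))
```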

8. Tier four: operational agent layers

Tools like OpenClaw, Hermes and similar workspace-native agent layers sit one level closer to real operations.

They are not just chat tools and not just workflow automation. They connect to the surfaces you actually use: files, browser, messages, calendar, repos and approvals.

Why this tier matters

This is the layer you want when the task crosses multiple surfaces and needs some memory, guardrails and human oversight.

Typical strengths:

- closer to your real work than a generic chat agent

- useful for multi-step operations across browser, files and messaging

- better fit for custom guardrails and repeatable ops

- can keep context across sessions instead of starting from zero every time

Typical tradeoffs:

- more setup and maintenance than simpler tools

- more permission and context complexity

- easier to over-engineer

- not as good for one-off tasks as a lightweight assistant

In short: great leverage, but only if you already know the workflow you want to own.

9. Ask OpenClaw directly

If you already have a setup and want to make it better, ask OpenClaw for a blunt review.

A tiny command toolbox helps here:

- openclaw status: to see whether the agent stack is actually healthy

- /status: inside the active session when you want the live runtime view

- /compact: when the thread gets bloated and you want to keep moving

Try questions like:

- What should I automate with Zapier or n8n instead of using a chat agent?

- Which part of my workflow belongs in Claude Code?

- What is the biggest context or token sink in my current setup?

- If you were starting from scratch, what would you build first?

That is where the real leverage shows up: not in the category names, but in choosing the right one for your actual problem.

Conclusion

The agent landscape is not chaos anymore. It is a stack.

Once you stop asking “which agent is best?” and start asking “which job am I trying to automate?”, the options get much clearer:

- Manus for research

- Zapier or n8n for workflows

- Claude Code for software

- OpenClaw, Hermes and similar layers for ops-level orchestration

Pick the smallest tool that can own the job. That is usually the fastest path to something that actually works.

Further reading

- OpenClaw in 30 minutes

- What is a harness?

- Claude Code docs: https://code.claude.com/docs

- Zapier AI: https://zapier.com/ai

- n8n Advanced AI docs: https://docs.n8n.io/advanced-ai/

- Manus Wide Research: https://manus.im/features/wide-research

- Manus Browser Operator: https://manus.im/features/manus-browser-operator